PerfHr journal essay

From KRAs to Outcomes: Building Accountability Without Micromanagement

Key Result Areas (KRAs) stay decorative until they are translated into visible signals, a review rhythm, and manager intervention. Accountability gets calmer when expectations become explicit and reviewable.

Why this matters

Why the current design fails long before leaders call it a problem

Most teams do not ignore KRA-based accountability; they smother it with documentation. The administrative version of the work looks busy because there are forms, dashboards, templates, or review meetings in motion, but the control version is missing. Once that happens, leaders speak about responsibility while employees still cannot see what success looks like week to week, and the room slowly fills with updates that sound responsible without changing what managers do next.

The visible symptom is usually not a spectacular failure. It is drift. Numbers start moving without explanation. Managers spend more time defending why something happened than deciding what should happen now. Team members can repeat the language of the system, but they still cannot tell you which signal matters most, who owns the correction, or when the issue will be reviewed again.

That is why the goal is not more hygiene. The goal is expectations that are visible enough to coach, fair enough to defend, and concrete enough to close. A real operating model reduces narrative, narrows attention to the few signals that matter, and gives managers permission to act on the basis of evidence instead of atmosphere. When the model is designed this way, it becomes a weekly control surface rather than an annual compliance ritual.

- Three to five KRAs per role

- One visible signal per expectation

- One manager intervention path when drift appears
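The three rules above can be written down as a small check on a role definition. This is a minimal sketch under stated assumptions, not a prescribed tool: the names `KRA`, `Role`, and `design_problems` are hypothetical, and the only rules encoded are the ones listed above (three to five KRAs, one signal each, one intervention path each).

```python
from dataclasses import dataclass, field


@dataclass
class KRA:
    expectation: str   # what the role is expected to deliver
    signal: str        # the one visible signal reviewed for this expectation
    intervention: str  # what the manager does when the signal drifts


@dataclass
class Role:
    name: str
    kras: list[KRA] = field(default_factory=list)

    def design_problems(self) -> list[str]:
        """Return plain-language problems with this role's KRA set."""
        problems = []
        # Rule 1: three to five KRAs per role.
        if not 3 <= len(self.kras) <= 5:
            problems.append(f"{self.name}: has {len(self.kras)} KRAs, expected 3 to 5")
        for kra in self.kras:
            # Rule 2: every expectation needs a visible signal.
            if not kra.signal.strip():
                problems.append(f"{self.name}: no visible signal for '{kra.expectation}'")
            # Rule 3: every expectation needs an intervention path.
            if not kra.intervention.strip():
                problems.append(f"{self.name}: no intervention path for '{kra.expectation}'")
        return problems
```

An empty list from `design_problems()` means the role passes the three structural rules; it says nothing about whether the signals chosen are the right ones, which remains a management judgement.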

Design logic

What the system must do before anyone trusts the model

A usable design does three things at once. First, it narrows the signal set so managers do not drown in noise. Second, it connects each signal to a decision point inside the same reporting rhythm. Third, it names an accountable owner who can explain movement, propose action, and close the loop without waiting for a committee to bless the next step.

In practice, that means the system has to survive contact with real work. People will use it on rushed Mondays, before investor calls, during hiring spikes, and in weeks when managers are already overloaded. If the design depends on perfect data, long explanatory notes, or specialist support every time the number moves, it is already too fragile for the environment it is supposed to govern.

The simpler way to think about it is this: the design should help a leader walk into an accountability conversation with a manager, scan the situation in minutes, ask better questions, and leave with fewer unresolved issues than they arrived with. When the room can do that consistently, teams start trusting the structure because it is visibly reducing confusion instead of creating more of it.

Quick diagnostic

Accountability calibration quiz

Check whether your current KRA system creates clarity or just abstract pressure.

- Can an employee explain what evidence proves they are on track this week?

- What does the manager do when a KRA drifts?

- What keeps the accountability conversation fair?

Build the model

How to build the model so managers actually use it

Start with the minimum set of decisions the manager must be able to make with confidence. This is the opposite of a brainstorming exercise. You are not asking what could be measured; you are asking what must be visible for a manager to coach, unblock, escalate, or reallocate work. That single question prevents the design from turning into a catalogue of metrics that look impressive but never shape behaviour.

Next, force the model to separate controllable activity from quality standards and final outcomes. This is where many teams blur the layers and create unfair management conversations. If a number can only move over a quarter, it cannot be the only signal used in a weekly coaching discussion. If a quality standard matters, it must be explicit enough that two different managers would interpret it the same way in the same review.

Finally, make the design speak the language of the business instead of the language of policy. The fastest way to kill adoption is to introduce a technically correct framework that feels foreign to operators. Managers should be able to point to the signal, connect it to the work, and explain what good looks like in plain language. When they cannot do that, adoption stalls even if the framework is conceptually right.

- Translate role language into observable work output

- Choose signals the manager can review in the actual cadence

- Define what intervention looks like before performance drops too far
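The layering rule described above (a number that only moves over a quarter cannot be the sole weekly signal) can be expressed as a simple check. The sketch below is illustrative only: `Signal`, `reviewable`, `weekly_room_is_fair`, and the day counts in `CADENCE_DAYS` are assumptions introduced for the example.

```python
from dataclasses import dataclass

# Assumed approximate length of each review period, in days.
CADENCE_DAYS = {"weekly": 7, "monthly": 30, "quarterly": 90}


@dataclass
class Signal:
    name: str
    layer: str       # "activity", "quality", or "outcome"
    moves_over: str  # shortest period over which the number can realistically move


def reviewable(signals: list[Signal], cadence: str) -> list[Signal]:
    """Signals that can realistically move within the given review cadence."""
    limit = CADENCE_DAYS[cadence]
    return [s for s in signals if CADENCE_DAYS[s.moves_over] <= limit]


def weekly_room_is_fair(signals: list[Signal]) -> bool:
    """True if at least one signal can move within a week.

    If this is False, the weekly coaching discussion rests only on numbers
    the employee cannot influence between one review and the next.
    """
    return len(reviewable(signals, "weekly")) > 0
```

The design choice here is to tag each signal with the period over which it can move, so that a slow outcome measure is still tracked but is never the only thing on the table in a weekly conversation.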

Operating cadence

What leaders and managers should review in every cycle

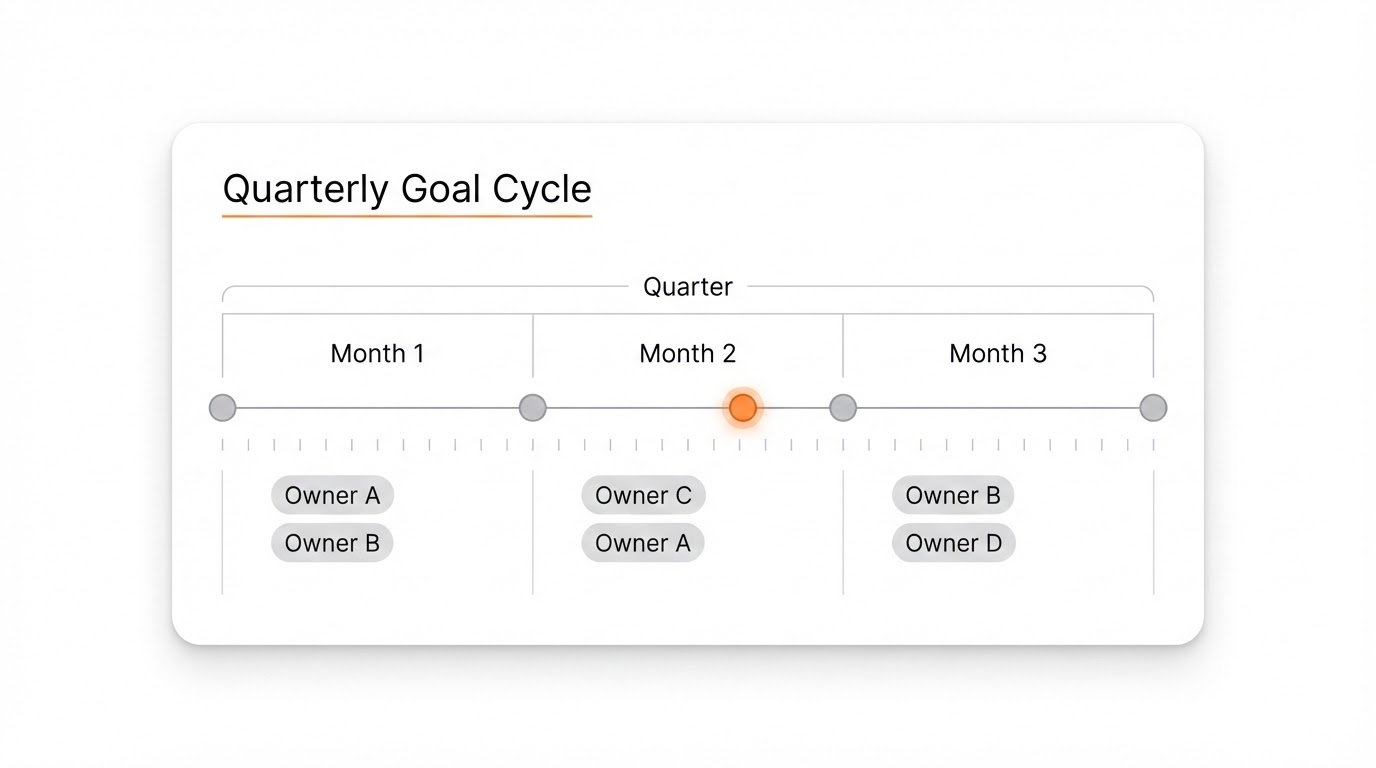

A good review cycle is not a calendar event; it is a conversion point where signal becomes intervention. The rhythm needs to create a consistent path from evidence to action; otherwise the organisation becomes excellent at describing slippage and poor at correcting it. For most teams, the answer is a short weekly loop for movement and a slower monthly loop for pattern recognition, capability building, and structural fixes.

Inside the weekly room, the discipline is to keep the conversation narrow: what moved, why it moved, who owns the response, and what closure looks like before the next cycle. That sounds obvious, but most review rooms abandon the sequence halfway through. They jump from movement to opinion, or from risk to general commentary, and the issue leaves the room with no named action and no visible due date.

Monthly reviews should then look above the individual issue. This is where leadership asks whether the same problem is repeating across managers, functions, roles, or workflows. Without that second layer, teams become reactive and chase recurring symptoms. With it, the organisation gradually strengthens the underlying operating system instead of dealing with each week as a fresh emergency.

- Which expectation is most at risk this cycle?

- What evidence proves the gap is real?

- What support or correction is the manager accountable for next?
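One way to keep the weekly sequence intact (what moved, why it moved, who owns the response, what closure looks like) is to record each issue in a fixed shape and flag anything that leaves the room incomplete. This is a minimal sketch; `ReviewItem`, `missing`, and `open_loops` are hypothetical names, and the four required fields are taken from the weekly sequence described above.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ReviewItem:
    signal: str                 # which signal is being discussed
    what_moved: str             # the observed movement
    why: str = ""               # the explanation agreed in the room
    owner: str = ""             # the named person who owns the response
    action: str = ""            # the named action
    due: Optional[date] = None  # the visible due date for closure

    def missing(self) -> list[str]:
        """List the parts of the weekly sequence this item still lacks."""
        gaps = []
        if not self.why.strip():
            gaps.append("why it moved")
        if not self.owner.strip():
            gaps.append("owner")
        if not self.action.strip():
            gaps.append("action")
        if self.due is None:
            gaps.append("due date")
        return gaps


def open_loops(items: list[ReviewItem]) -> dict[str, list[str]]:
    """Map each incomplete item's signal to what it still lacks."""
    return {item.signal: item.missing() for item in items if item.missing()}
```

Running `open_loops` at the end of a review gives the room a short list of issues that would otherwise leave with no named action or due date.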

Manager behaviour

How managers should talk when the model is working properly

The first visible sign of a healthy system is usually a change in manager language. Weak systems produce vague questions like "what is going on?", "why is this still happening?", or "can we all pay more attention here?". Strong systems produce narrower prompts: which signal moved, what changed in the work, who owns the response, and when we will know it is closed. That shift sounds small, but it changes the quality of management immediately.

Managers also need to learn how to avoid accidental overreach. When a framework is new, some leaders react by asking for more commentary, more evidence, and more narrative because they are still building trust in the system. That usually backfires. The better move is to ask for the minimum proof required to make a decision. If the system keeps demanding essays, it will slowly teach people that presentation skill matters more than operating clarity.

Finally, managers have to model consistency. Teams watch how leadership behaves in the first few cycles. If one manager follows the structure but another keeps improvising side requests, the system fragments quickly. The organisation should therefore coach the management population on what good questions sound like, what evidence is sufficient, and what the room must not become when pressure rises.

- Expectation, visible signal, review cadence, and intervention and closure should each appear as a live manager prompt, not as documentation jargon.

Where teams go wrong

The mistakes that make the model look sophisticated and perform badly

The most common error is volume. Teams add measures because they fear missing something, but the result is a system where nothing is clearly important. People then revert to intuition, hierarchy, or recency bias because the formal model is too noisy to help. The second error is ambiguity: terms like ownership, quality, readiness, or closure sound aligned until a real issue appears and every manager interprets them differently.

Another frequent mistake is treating the framework as a communication artefact instead of a control device. In that version, the slides look polished, the documentation reads well, and the managers can present the structure, but nobody has changed the review behaviour underneath it. The moment pressure rises, the organisation falls back to ad hoc conversations because the designed rhythm was never embedded strongly enough to survive a difficult week.

A final failure mode is leaving managers alone with the framework after launch. Most systems die in the transition from design to use. People need coached examples, observed review rooms, and a visible standard for what good management looks like inside the new rhythm. If the business does not create that bridge, even a well-designed model gets blamed for adoption problems that are actually implementation problems.

- Confusing KRAs with broad aspirations

- Leaving managers to invent standards on the fly

- Using accountability language only after problems become serious

Proof of adoption

How to tell whether the model is becoming part of the operating system

The cleanest proof is behavioural, not aesthetic. Managers should start arriving at reviews with shorter explanations and clearer actions. Team members should be able to explain the signal set without referring back to a deck or a policy sheet. Repeated issues should either close faster or escalate more cleanly because the decision path is no longer ambiguous. Those are the signs that the model is entering daily use.

Another useful signal is reduction in parallel systems. When the core model is working, people stop maintaining shadow trackers, side spreadsheets, or private narrative notes that only exist because the official system cannot answer basic management questions. Shadow systems are often the earliest warning that adoption is weaker than leadership believes. If they keep appearing, the formal design is still not trusted enough under pressure.

Leadership should also test the system across different managers, not only with the strongest operators. A model that works only when an exceptional manager runs it is not yet a business system. A mature design can travel across contexts because the prompts, definitions, and closure logic are explicit enough to survive variation in style. That portability is what turns a good framework into a scalable one.

- Managers ask shorter and sharper questions.

- Issue closure becomes visible across cycles.

- Shadow trackers and side systems begin to disappear.

Next 30 days

What to do in the next 30 days if you want the model to hold

Week one is about reduction, not expansion. Strip the model back to the few signals that change the manager conversation, name owners, and decide the meeting where each number will be reviewed. Week two is about calibration: run the room, observe where people still narrate instead of decide, and tighten the prompts until evidence reliably leads to action.

Week three is where many organisations either institutionalise the change or lose it. This is the moment to document the minimum standard for the room, record examples of good interventions, and make sure leadership is reinforcing the same logic instead of improvising a parallel process. If senior leaders ask for different views every week, the new system will never stabilise.

By week four, you should be able to answer three questions clearly. Are the right signals visible? Are owners being named without ambiguity? Are actions closing before the next cycle? If the answer to any of those is still no, do not add more complexity. Tighten the operating discipline first. Durable control comes from repeatability, not from a bigger framework.

- Pick one role and rewrite KRAs as visible weekly or monthly signals

- Train managers to run one intervention conversation from the signal

- Review whether the new visibility changed coaching quality